
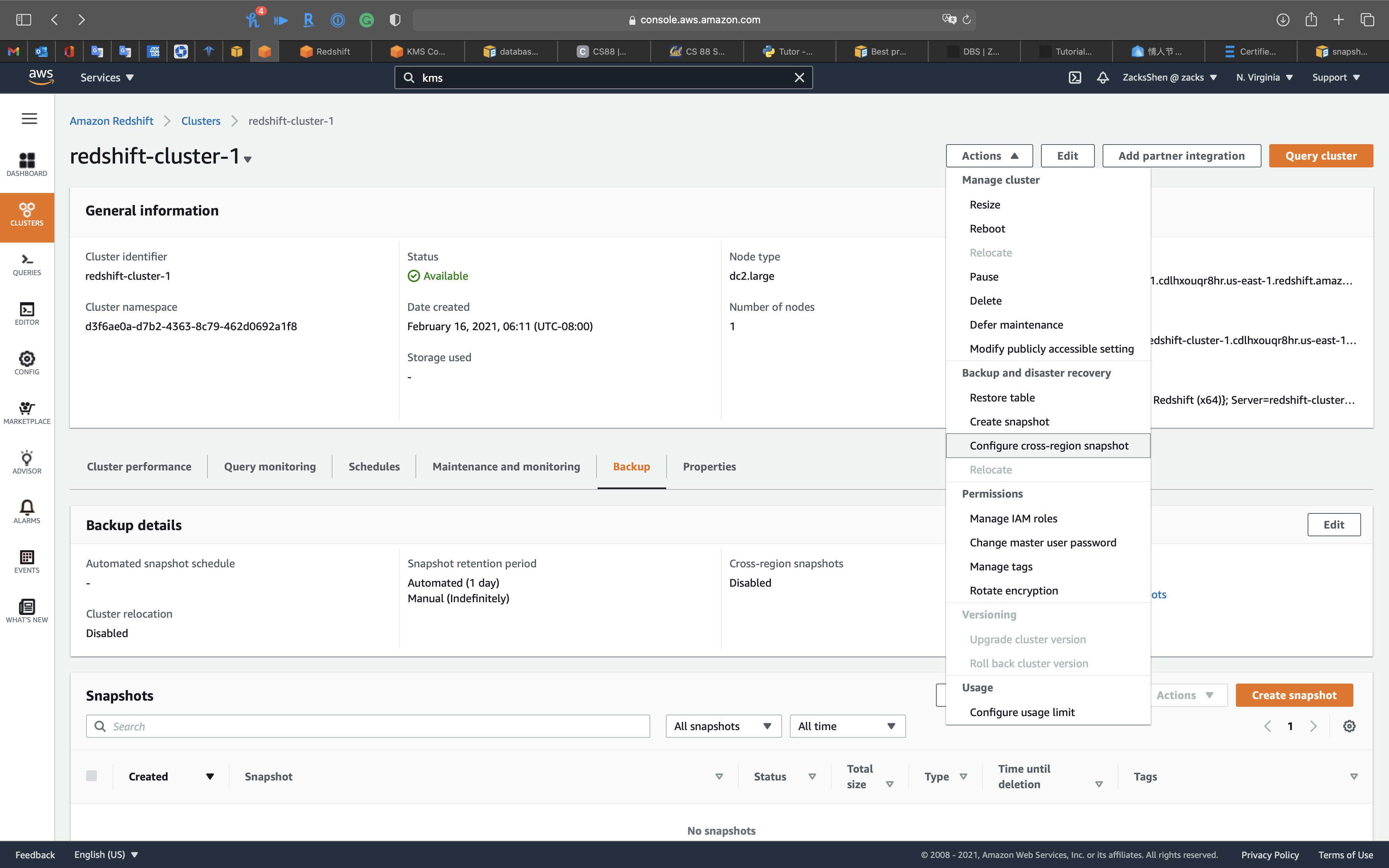

Amazon Athena vs Amazon EMR: What are the differences?

Amazon Athena: Query S3 Using SQL. Amazon Athena is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL. Athena is serverless, so there is no infrastructure to manage, and you pay only for the queries that you run.

Amazon EMR: Distribute your data and processing across Amazon EC2 instances using Hadoop. Amazon EMR is used in a variety of applications, including log analysis, web indexing, data warehousing, machine learning, financial analysis, scientific simulation, and bioinformatics. Customers launch millions of Amazon EMR clusters every year.

Amazon Athena belongs to the "Big Data Tools" category of the tech stack, while Amazon EMR can be primarily classified under "Big Data as a Service". "Use SQL to analyze CSV files" is the top reason why over 9 developers like Amazon Athena, while over 13 developers mention "On demand processing power" as the leading cause for choosing Amazon EMR. Netflix, Medium, and Yelp are some of the popular companies that use Amazon EMR, whereas Amazon Athena is used by Auto Trader, Zola, and Twilio SendGrid. Amazon EMR has broader approval, being mentioned in 95 company stacks and 18 developer stacks, compared to Amazon Athena, which is listed in 50 company stacks and 18 developer stacks.

First of all, you should choose between Redshift and Athena based on your use case, since they are two very different services: Redshift is an enterprise-grade MPP data warehouse, while Athena is a SQL layer on top of S3 with limited performance. If performance is a key factor, users are going to execute unpredictable queries, and direct and management costs are not a problem, I'd definitely go for Redshift. If performance is not so critical and queries will be somewhat predictable, I'd go for Athena.

Once you select the technology you'll need to optimize your data in order to get the queries executed as fast as possible. In both cases you may need to adapt the data model to fit your queries better. If you go for Athena you'd probably also need to change your file format to Parquet or Avro and review your partition strategy depending on your most frequent type of query. If you choose Redshift you'll need to ingest the data from your files into it and maybe carry out some tuning tasks for performance gain. I'll recommend Redshift for now since it can address a wider range of use cases, but we could give you better advice if you described your use case in depth.

Dataform is a powerful tool for managing data transformations in your warehouse. With Dataform you can automatically manage dependencies, schedule queries and easily adopt engineering best practices with built-in version control. Currently Dataform integrates with Google BigQuery, Amazon Redshift, Snowflake and Azure Data Warehouse. However, often the "root" of your data is in another external source. If this is the case and you're considering using a tool like Dataform to start building out your data stack, then there are some simple scripts you can run to import this data into your cloud warehouse using Dataform.

We're going to talk about how to import data from Amazon S3 to Amazon Redshift in just a few minutes, using the COPY command. This allows you to load data in parallel from multiple data sources. The COPY command can also be used to load files from other sources.

Before you begin you need to make sure you have:

- An Amazon Web Services (AWS) account.
- Permissions in AWS Identity and Access Management (IAM) that allow you to create policies, create roles, and attach policies to roles. This is required to grant Dataform access to your S3 bucket.
- Verified that column names in CSV files in S3 adhere to your destination's length limit for column names. If a column name is longer than the destination's character limit it will be rejected. In Redshift's case the limit is 115 characters.
- An Amazon S3 bucket containing the CSV files that you want to import.
- A Redshift cluster. If you do not already have a cluster set up, see how to launch one here.
- A Dataform project set up which is connected to your Redshift warehouse.

OK, now you've got all that sorted, let's get started! Create a sqlx file in your project under the definitions/ folder. To execute the COPY command you need to provide several values, including the target table for the COPY command. Using Dataform's enriched SQL this is what the code should look like: config
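The post's own Dataform code for the S3 to Redshift import is not reproduced on this page. As a rough illustration only, here is a minimal Python sketch (not Dataform SQLX) of the two steps the post describes: checking CSV column names against the length limit, and assembling a Redshift COPY statement. The function names, the example bucket, table, role ARN and region are all hypothetical, and the 115-character default is simply the figure quoted in this post.

```python
import csv
import io

# Limit quoted in this post for Redshift column names; treat it as an
# assumption and confirm it for your own destination.
MAX_COLUMN_NAME_LENGTH = 115


def too_long_columns(csv_text, limit=MAX_COLUMN_NAME_LENGTH):
    """Return header names from the first CSV row longer than `limit` characters."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader, [])  # empty input yields an empty header
    return [name for name in header if len(name) > limit]


def build_copy_statement(table, s3_path, iam_role_arn, region):
    """Assemble a Redshift COPY statement for CSV files that have a header row."""

    def quote(value):
        # Escape single quotes so the value is a valid SQL string literal.
        return "'" + value.replace("'", "''") + "'"

    return (
        f"COPY {table}\n"
        f"FROM {quote(s3_path)}\n"
        f"IAM_ROLE {quote(iam_role_arn)}\n"
        f"REGION {quote(region)}\n"
        "FORMAT AS CSV\n"
        "IGNOREHEADER 1;"
    )


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(too_long_columns("id,customer_name\n1,Ada\n"))
    print(
        build_copy_statement(
            table="public.orders",
            s3_path="s3://example-bucket/orders/",
            iam_role_arn="arn:aws:iam::123456789012:role/ExampleRedshiftRole",
            region="us-east-1",
        )
    )
```

The generated statement is plain SQL, so it could be run from any Redshift client; how it would be wrapped in a sqlx file depends on your Dataform setup.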
